
AI Prototype · Accessibility · Human-Centered Design

An AI-supported PDF reader designed to make written content more accessible. The system extracts key information from documents and converts it into spoken output, helping visually impaired users navigate and understand complex texts more easily.

Overview

Smartphones and digital documents are central to everyday life, but they are not equally accessible to everyone. For visually impaired users, reading PDFs can be slow, frustrating or, depending on the formatting, even impossible. This project explores how artificial intelligence can bridge that gap by transforming static documents into interactive, voice-based experiences. Instead of manually navigating through dense text, users receive summarized insights and can listen to the content in a more natural way. The goal was not only to improve accessibility but also to rethink how information can be consumed beyond visual interfaces.

The project was developed as a human-machine communication prototype and investigates how an interaction model based on listening and simplified understanding can replace purely visual reading.

Functions

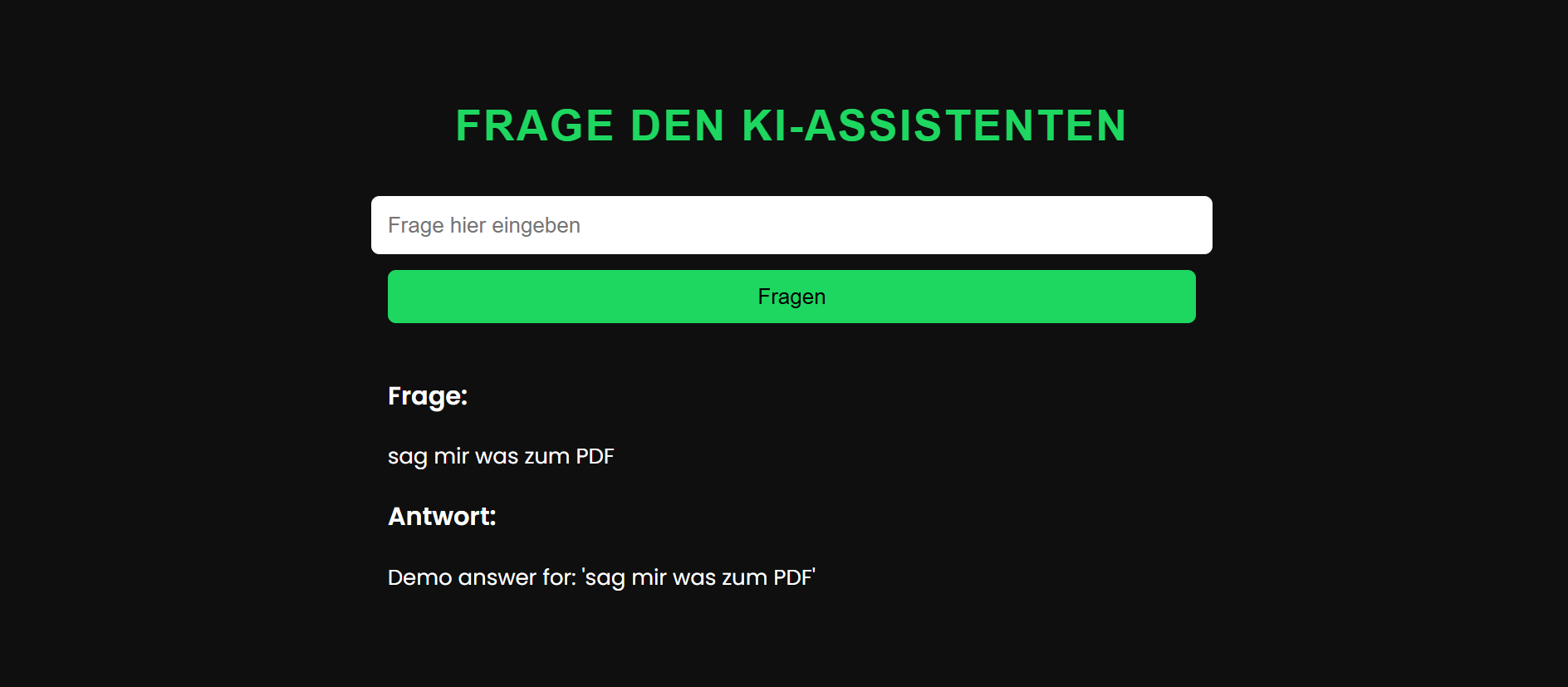

The system processes uploaded PDF files and extracts relevant content using an AI-based backend. Instead of presenting the full raw text, it identifies key information and structures it into a more understandable format.
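How the backend prepares text for the AI service is not documented here. A common pattern, shown below as a minimal Python sketch, is to split the raw text extracted from the PDF into paragraph-aligned chunks so that each request stays within the service's input limit. The function name `chunk_text` and the `max_chars` limit are hypothetical; real AI services usually measure their limits in tokens rather than characters.

```python
from __future__ import annotations


def chunk_text(text: str, max_chars: int = 4000) -> list[str]:
    """Split extracted PDF text into chunks of at most max_chars characters.

    Chunks break on blank-line paragraph boundaries where possible; a single
    paragraph longer than max_chars is hard-split at the character limit.
    """
    if max_chars < 1:
        raise ValueError("max_chars must be at least 1")
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    chunks: list[str] = []
    current = ""
    for para in paragraphs:
        # Hard-split oversized paragraphs, flushing any pending chunk first.
        while len(para) > max_chars:
            if current:
                chunks.append(current)
                current = ""
            chunks.append(para[:max_chars])
            para = para[max_chars:]
        if not para:
            continue
        candidate = f"{current}\n\n{para}" if current else para
        if len(candidate) <= max_chars:
            current = candidate  # paragraph still fits in the current chunk
        else:
            chunks.append(current)  # current chunk is full; start a new one
            current = para
    if current:
        chunks.append(current)
    return chunks
```

Each chunk could then be sent to the AI service separately and the partial results merged into one structured summary.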

Users can listen to the content through an integrated audio output. This allows documents to be consumed without relying on visual interaction, supporting accessibility and multitasking.
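To let listeners pause, skip or repeat parts of a document, the structured text can be cut into short segments that an audio engine speaks one at a time. The sketch below assumes such a segment-by-segment playback model, which the project description does not confirm; `speech_segments` is a hypothetical helper, and its regex-based sentence split is deliberately naive (abbreviations such as "e.g." will be split incorrectly).

```python
from __future__ import annotations

import re


def speech_segments(summary: str, max_sentences: int = 2) -> list[str]:
    """Group a summary into short segments for segment-by-segment playback."""
    if max_sentences < 1:
        raise ValueError("max_sentences must be at least 1")
    # Naive sentence split: break after '.', '!' or '?' followed by whitespace.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", summary) if s.strip()]
    # Join every max_sentences sentences into one playable segment.
    return [
        " ".join(sentences[i:i + max_sentences])
        for i in range(0, len(sentences), max_sentences)
    ]


# Example: each segment could be handed to a text-to-speech engine in turn,
# so the user can skip ahead or repeat the previous segment.
segments = speech_segments(
    "The report has three parts. Part one covers costs. "
    "Part two covers risks. Part three concludes."
)
```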

INTERACTION / USER FLOW

Use Cases

The primary focus of the project was to support blind or visually impaired users by enabling access to written information through audio.

The system can also be used to quickly understand academic texts or long documents without reading everything in detail.

From articles to reports, the tool helps users consume information more efficiently in situations where reading is not practical.

Prototype

This project was developed as a university prototype by a team of six. The focus was on building a functional system that demonstrates how AI can enhance accessibility in document-based workflows. The backend parses uploaded PDF files and sends their content to an external AI service, authenticated with an API key, which analyzes and transforms it. The system combines document parsing, AI-based text processing and audio output into a single interaction flow.
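The single interaction flow described above can be sketched as three pluggable stages: text extraction, AI processing and audio output. In this minimal Python sketch each stage is passed in as a function, so a real PDF parser, the AI service client and a text-to-speech engine could be swapped in without changing the flow. The class `ReaderPipeline` and the environment variable `AI_API_KEY` are hypothetical names, and the stub stages at the bottom only stand in for the real components.

```python
from __future__ import annotations

import os
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class ReaderPipeline:
    """Hypothetical wiring of the three stages: extract -> process -> speak."""

    extract_text: Callable[[bytes], str]  # PDF bytes -> raw text (e.g. a PDF parser)
    process: Callable[[str, str], str]    # (raw text, API key) -> structured summary
    speak: Callable[[str], None]          # summary -> audio output (e.g. a TTS engine)

    def run(self, pdf_bytes: bytes, api_key: Optional[str] = None) -> str:
        # Prefer an explicitly passed key, otherwise fall back to the environment.
        key = api_key or os.environ.get("AI_API_KEY")
        if not key:
            raise RuntimeError("No API key configured (set AI_API_KEY).")
        raw_text = self.extract_text(pdf_bytes)
        summary = self.process(raw_text, key)
        self.speak(summary)
        return summary


# Example wiring with stub stages; no real parser, AI service or TTS engine is called.
spoken: list[str] = []
pipeline = ReaderPipeline(
    extract_text=lambda data: data.decode("utf-8"),
    process=lambda text, key: f"Summary: {text}",
    speak=spoken.append,
)
result = pipeline.run(b"Quarterly report", api_key="demo-key")
```

Passing the stages in as functions also lets the flow be tested without network access, and reading the key from the environment keeps it out of the source code shared within the team.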

Group Work

I took on the role of team lead, coordinating the development process and supporting both concept and technical implementation. In addition to organizing the team workflow, I contributed to the programming and integration of the system, helping to connect the different components into a working prototype.

INTENT

The project explores how artificial intelligence can make digital content more inclusive. Rather than designing a purely visual interface, the focus was on creating an alternative interaction model based on listening and simplified understanding. It highlights the potential of AI not just as a tool for automation, but as a means to enable access and reduce barriers in everyday digital experiences.